developer.nvidia.com/blog/achieving-top-inference-performance-with-the-nvidia-h100-tensor-core-gpu-and-nvidia-tensorrt-llm

Preview meta tags from the developer.nvidia.com website.

Linked Hostnames (10)

- 32 links to developer.nvidia.com

- 4 links to github.com

- 3 links to www.nvidia.com

- 1 link to docs.nvidia.com

- 1 link to forums.developer.nvidia.com

- 1 link to nvda.ws

- 1 link to twitter.com

- 1 link to www.facebook.com

Search Engine Appearance

https://developer.nvidia.com/blog/achieving-top-inference-performance-with-the-nvidia-h100-tensor-core-gpu-and-nvidia-tensorrt-llm

Achieving Top Inference Performance with the NVIDIA H100 Tensor Core GPU and NVIDIA TensorRT-LLM | NVIDIA Technical Blog

Best-in-class AI performance requires an efficient parallel computing architecture, a productive tool stack, and deeply optimized algorithms.

Bing

Achieving Top Inference Performance with the NVIDIA H100 Tensor Core GPU and NVIDIA TensorRT-LLM | NVIDIA Technical Blog

https://developer.nvidia.com/blog/achieving-top-inference-performance-with-the-nvidia-h100-tensor-core-gpu-and-nvidia-tensorrt-llm

Best-in-class AI performance requires an efficient parallel computing architecture, a productive tool stack, and deeply optimized algorithms.

DuckDuckGo

Achieving Top Inference Performance with the NVIDIA H100 Tensor Core GPU and NVIDIA TensorRT-LLM | NVIDIA Technical Blog

Best-in-class AI performance requires an efficient parallel computing architecture, a productive tool stack, and deeply optimized algorithms.

General Meta Tags (11)

- title: Achieving Top Inference Performance with the NVIDIA H100 Tensor Core GPU and NVIDIA TensorRT-LLM | NVIDIA Technical Blog

- charset: utf-8

- x-ua-compatible: ie=edge

- viewport: width=device-width, initial-scale=1, shrink-to-fit=no

- interest: Generative AI

Open Graph Meta Tags (13)

- og:type: article

- og:locale: en_US

- og:site_name: NVIDIA Technical Blog

- og:title: Achieving Top Inference Performance with the NVIDIA H100 Tensor Core GPU and NVIDIA TensorRT-LLM | NVIDIA Technical Blog

- og:description: Best-in-class AI performance requires an efficient parallel computing architecture, a productive tool stack, and deeply optimized algorithms. NVIDIA released the open-source NVIDIA TensorRT-LLM…

Twitter Meta Tags (5)

- twitter:card: summary_large_image

- twitter:title: Achieving Top Inference Performance with the NVIDIA H100 Tensor Core GPU and NVIDIA TensorRT-LLM | NVIDIA Technical Blog

- twitter:description: Best-in-class AI performance requires an efficient parallel computing architecture, a productive tool stack, and deeply optimized algorithms. NVIDIA released the open-source NVIDIA TensorRT-LLM…

- twitter:image: https://developer-blogs.nvidia.com/wp-content/uploads/2023/12/Top-inference-performance-H100.png

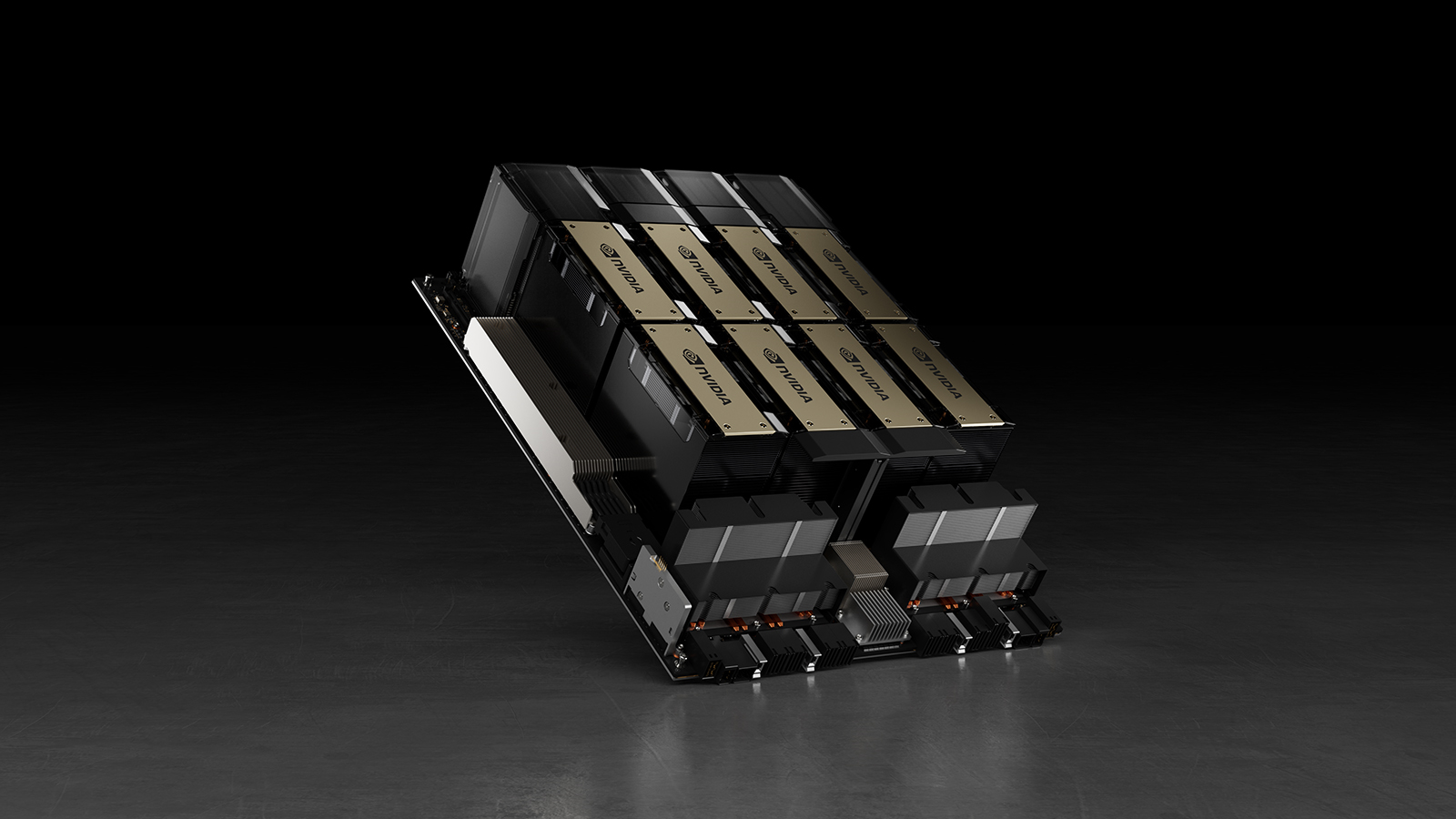

- twitter:image:alt: An illustration of the NVIDIA H100.

Link Tags (29)

- EditURI: https://developer-blogs.nvidia.com/xmlrpc.php?rsd

- alternate: https://developer.nvidia.com/blog/achieving-top-inference-performance-with-the-nvidia-h100-tensor-core-gpu-and-nvidia-tensorrt-llm/feed/

- alternate: https://developer-blogs.nvidia.com/wp-json/wp/v2/posts/75194

- alternate: https://developer-blogs.nvidia.com/wp-json/oembed/1.0/embed?url=https%3A%2F%2Fdeveloper.nvidia.com%2Fblog%2Fachieving-top-inference-performance-with-the-nvidia-h100-tensor-core-gpu-and-nvidia-tensorrt-llm%2F

- alternate: https://developer-blogs.nvidia.com/wp-json/oembed/1.0/embed?url=https%3A%2F%2Fdeveloper.nvidia.com%2Fblog%2Fachieving-top-inference-performance-with-the-nvidia-h100-tensor-core-gpu-and-nvidia-tensorrt-llm%2F&format=xml

Website Locales (2)

- en: https://developer.nvidia.com/blog/achieving-top-inference-performance-with-the-nvidia-h100-tensor-core-gpu-and-nvidia-tensorrt-llm/

- ko: https://developer.nvidia.com/ko-kr/blog/achieving-top-inference-performance-with-the-nvidia-h100-tensor-core-gpu-and-nvidia-tensorrt-llm/

Emails (1)

- ?subject=I'd like to share a link with you&body=https%3A%2F%2Fdeveloper.nvidia.com%2Fblog%2Fachieving-top-inference-performance-with-the-nvidia-h100-tensor-core-gpu-and-nvidia-tensorrt-llm%2F

Links (46)

- https://developer.nvidia.com

- https://developer.nvidia.com/blog

- https://developer.nvidia.com/blog/accelerating-gpu-analytics-using-rapids-and-ray

- https://developer.nvidia.com/blog/author/aeassa

- https://developer.nvidia.com/blog/author/dsalvator