dorverbin.github.io/refnerf

Preview meta tags from the dorverbin.github.io website.

Linked Hostnames (13)

- 2 links to storage.googleapis.com

- 1 link to arxiv.org

- 1 link to bmild.github.io

- 1 link to dorverbin.github.io

- 1 link to github.com

- 1 link to iaifi.org

- 1 link to jonbarron.info

- 1 link to mgharbi.com

Thumbnail

Search Engine Appearance

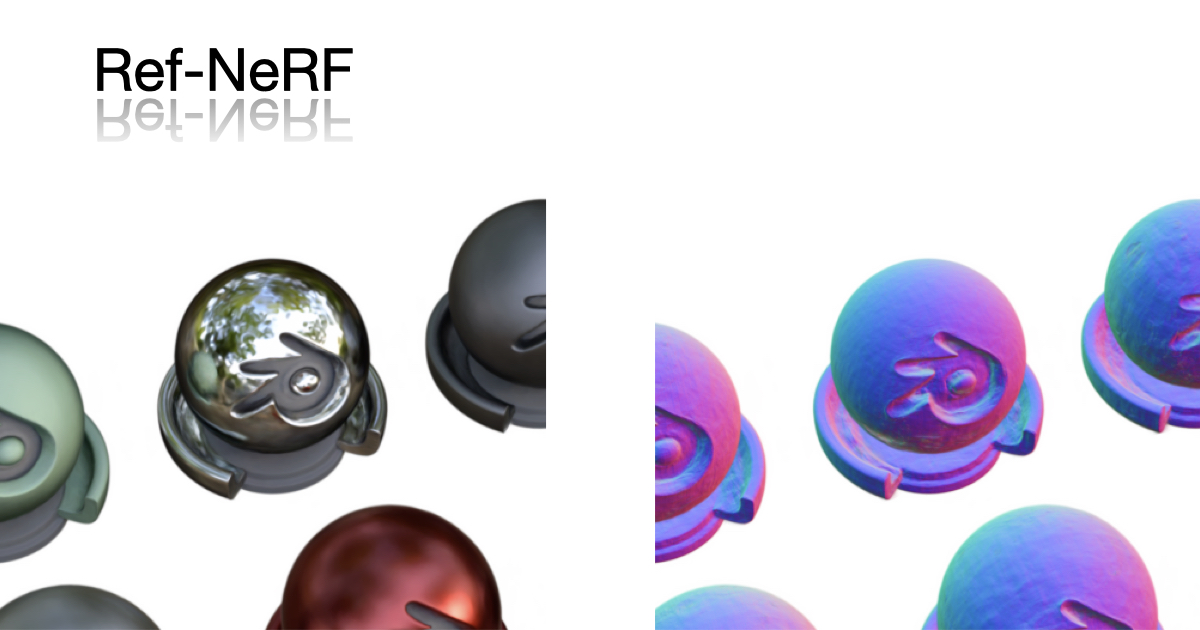

Ref-NeRF: Structured View-Dependent Appearance for Neural Radiance Fields

Neural Radiance Fields (NeRF) is a popular view synthesis technique that represents a scene as a continuous volumetric function, parameterized by multilayer perceptrons that provide the volume density and view-dependent emitted radiance at each location. While NeRF-based techniques excel at representing fine geometric structures with smoothly varying view-dependent appearance, they often fail to accurately capture and reproduce the appearance of glossy surfaces. We address this limitation by introducing Ref-NeRF, which replaces NeRF's parameterization of view-dependent outgoing radiance with a representation of reflected radiance and structures this function using a collection of spatially-varying scene properties. We show that together with a regularizer on normal vectors, our model significantly improves the realism and accuracy of specular reflections. Furthermore, we show that our model's internal representation of outgoing radiance is interpretable and useful for scene editing.
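The abstract's central change is to describe appearance in terms of reflected radiance rather than the raw viewing direction. That idea rests on the standard mirror-reflection formula ω_r = 2(ω_o · n)n − ω_o, where ω_o points from the surface toward the camera and n is the unit normal. The following is a minimal pure-Python sketch of that formula. It also includes one *assumed* form of a "regularizer on normal vectors": an orientation penalty Σ w_i · max(0, n_i · d)², which discourages normals that face away from the camera (d is the ray direction pointing into the scene). The helper names `reflect` and `orientation_penalty` are hypothetical. This is an illustration under those assumptions, not the authors' implementation.

```python
import math
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]


def dot(a: Vec3, b: Vec3) -> float:
    """Dot product of two 3-vectors."""
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def normalize(v: Vec3) -> Vec3:
    """Scale a 3-vector to unit length."""
    length = math.sqrt(dot(v, v))
    if length == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return (v[0] / length, v[1] / length, v[2] / length)


def reflect(omega_o: Vec3, normal: Vec3) -> Vec3:
    """Mirror-reflect the toward-camera direction omega_o about the normal.

    Implements omega_r = 2 (omega_o . n) n - omega_o on unit vectors.
    """
    o = normalize(omega_o)
    n = normalize(normal)
    k = 2.0 * dot(o, n)
    return (k * n[0] - o[0], k * n[1] - o[1], k * n[2] - o[2])


def orientation_penalty(
    weights: Sequence[float], normals: Sequence[Vec3], ray_dir: Vec3
) -> float:
    """Hypothetical normal regularizer: sum_i w_i * max(0, n_i . d)^2.

    ray_dir (d) points from the camera into the scene, so a normal facing
    the camera has n . d < 0 and contributes nothing; a back-facing normal
    is penalized in proportion to its weight.
    """
    d = normalize(ray_dir)
    total = 0.0
    for w, n in zip(weights, normals):
        total += w * max(0.0, dot(normalize(n), d)) ** 2
    return total
```

For example, `reflect((0.0, 1.0, 1.0), (0.0, 0.0, 1.0))` keeps the normal component and flips the tangential one, returning approximately `(0.0, -0.707, 0.707)`. With equal weights of 0.5 on a camera-facing normal `(0, 0, -1)` and a back-facing normal `(0, 0, 1)` along ray `(0, 0, 1)`, `orientation_penalty` returns 0.5, because only the back-facing normal is penalized.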


General Meta Tags (5)

- title: Ref-NeRF

- Content-Type: text/html; charset=UTF-8

- x-ua-compatible: ie=edge

- description:

- viewport: width=device-width, initial-scale=1

Open Graph Meta Tags (8)

- og:image: https://dorverbin.github.io/refnerf/img/refnerf_titlecard.jpg

- og:image:type: image/png

- og:image:width: 1200

- og:image:height: 630

- og:type: website

Twitter Meta Tags (4)

- twitter:card: summary_large_image

- twitter:title: Ref-NeRF: Structured View-Dependent Appearance for Neural Radiance Fields

- twitter:description: Neural Radiance Fields (NeRF) is a popular view synthesis technique that represents a scene as a continuous volumetric function, parameterized by multilayer perceptrons that provide the volume density and view-dependent emitted radiance at each location. While NeRF-based techniques excel at representing fine geometric structures with smoothly varying view-dependent appearance, they often fail to accurately capture and reproduce the appearance of glossy surfaces. We address this limitation by introducing Ref-NeRF, which replaces NeRF's parameterization of view-dependent outgoing radiance with a representation of reflected radiance and structures this function using a collection of spatially-varying scene properties. We show that together with a regularizer on normal vectors, our model significantly improves the realism and accuracy of specular reflections. Furthermore, we show that our model's internal representation of outgoing radiance is interpretable and useful for scene editing.

- twitter:image: https://dorverbin.github.io/refnerf/img/refnerf_titlecard.jpg

Link Tags (5)

- icon: data:image/svg+xml,<svg xmlns=%22http://www.w3.org/2000/svg%22 viewBox=%220 0 100 100%22><text y=%22.9em%22 font-size=%2290%22>%E2%9C%A8</text></svg>

- stylesheet: css/bootstrap.min.css

- stylesheet: css/font-awesome.min.css

- stylesheet: css/codemirror.min.css

- stylesheet: css/app.css

Links (14)

- http://bmild.github.io

- http://iaifi.org

- http://mgharbi.com

- http://www.eecs.harvard.edu/~zickler/Main/HomePage

- https://arxiv.org/abs/2112.03907